data forensics workshop introduction atelier nord 6 nov 2008

Data forensics [in the landscape]

background/abstract/definition

Any time a machine is used to process classified information electrically, the various switches, contacts, relays, and other components in that machine may emit radio frequency or acoustic energy.

… This problem of compromising radiation we have given the covername TEMPEST.

[TEMPEST: A Signal Problem. NSA 1972]

- key elements - a classified information source (that which must be kept hidden), and compromising radiation - also entry of intention - this information is not MEANT to be revealed

TEMPEST (before we arrive at DF) as opening the world as information, as becoming SIGNAL and meaning (cf. SETI)

__

Expanding a clear concern with electromagnetic [EM] phenomena as a question of substance, data forensics extends the spectrum of artistic concerns to embrace data space. Under the mastheads of signal and noise, data, or digital, forensics proposes a further examination of contemporary presence, a materiality and architecture seemingly at odds with a digital realm which humbles its carrier, submitting the aether to the status of a lowly medium; that which is modulated.

Data forensics in this instance refers to the artistic and practical analysis of [un]intentional signals, raising questions of both this intention and a modelling of the world.

Data forensics extends a series of practical and theoretical investigations concerned with electromagnetic [EM] phenomena into the world of data space. Spanning signal and noise, digits and decay, data forensics explores the often unintuitive reality that digital data has its own electromagnetic (physical) presence, a physicality that can be read and perhaps even modulated through the carrier medium itself.

___

Data forensics presents a looking-glass exchange between two domains. The digital informs and reveals the physical and vice versa; equally, there is an exchange of practices. For example, we can borrow techniques from real-world forensics, gumshoe-style, to examine and attempt to make sense of leaked data emissions, or to expose and elaborate a notion of data sedimentation, examining an archaeology of everyday digital usage. At the same time, technological practices inform traditional forensics, as alternative architectures, ancient constructions and intensities are revealed by novel, EM-inspired techniques.

Sub-topics under the broad heading of data forensics could include:

making sense of landscape from a forensics perspective, reverse engineering, mapping protocols of hiding onto the real, photo and audio reconnaissance, data sedimentation, data visualisation, TEMPEST, cryptography, mapping of event intensity using GPS, signals, noise and strategies for interpretation of the intentionality of transmissions

defining our parallel

defined during working groups at Peenemünde - a place where we were precisely concerned with both hiding (that which is hidden (in language)) as a philosophical concern, and the overlap of a conventional idea of landscape with digital projection (simulation and description); the contours of an other environment.

PM:

… yes the “Allied” planes all would have been, ultimately, IG-built, by way of Director Krupp, through his English interlocks—the bombing was the exact industrial process of conversion, each release of energy placed exactly in space and time, each shock-wave plotted in advance to bring precisely tonight's wreck into being thus decoding the Text, thus coding, recoding, redecoding the holy Text…

[GR. p. 521]

Divining and providing ideas for a future (data) archaeology. Key concepts include physical data sedimentation, decoding and paranoia, cryptography, techniques for the examination of promiscuous data leakage, and making sense of/within a landscape.

At the same time, the workshop provoked an examination and critique of pervasive surveillance technologies; it is worth noting that parallel to the development of space technology, the first CCTV system was installed at Test Stand VII in Peenemünde in 1942. Aerial reconnaissance of the site also stands as an important test case within this field.

There is an obvious relationship with media archaeology, and with the question of how stratification, sedimentation and landscape parallel a so-called data-space. There are also links to the practice of photo reconnaissance, in which PM is noted as a test case.

philosophy (inc. TEMPEST)

Data Forensics as we understand it within the context of the workshop has deep roots in the tight network of concerns trafficking under the covername of TEMPEST:

what is TEMPEST

TEMPEST refers to the study and potential control of compromising emanations (CE) which can be used to recover the plain text (or other information) of an encoded or national-security-related nature. CE potentials include acoustic and electromagnetic radiation [including in this wide spectrum visible light (optical emanations)]

Compromising emanations are [thus] defined as unintentional intelligence-bearing signals which, if intercepted and analyzed, may disclose the information transmitted, received, handled, or otherwise processed by any information-processing equipment.

[Wikipedia - note the relation to intentionality and intelligence]

TEMPEST is a form of side-channel attack [In cryptography, a side channel attack is any attack based on information gained from the physical implementation of a cryptosystem, rather than brute force or theoretical weaknesses in the algorithms … For example, timing information, power consumption, electromagnetic leaks or even sound can provide an extra source of information which can be exploited to break the system.[Wikipedia]]
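The timing case in the definition above can be sketched in a few lines. This is a toy, not a real attack: the step counter returned by `leaky_equal` stands in for measured elapsed time, and `leaky_equal` and `recover` are hypothetical names invented for the illustration. It also assumes the length of the secret is already known.

```python
def leaky_equal(secret: bytes, guess: bytes):
    """Early-exit comparison. Returns (equal?, number of byte comparisons made).

    The second value is the side channel: it stands in for how long the
    comparison took.
    """
    steps = 0
    if len(secret) != len(guess):
        return False, steps
    for s, g in zip(secret, guess):
        steps += 1
        if s != g:
            return False, steps
    return True, steps


def recover(secret: bytes) -> bytes:
    """Recover the secret one byte at a time, using only the step count."""
    known = bytearray(len(secret))
    for i in range(len(secret)):
        for candidate in range(256):
            known[i] = candidate
            equal, steps = leaky_equal(secret, bytes(known))
            # The comparison got past position i, so this byte is right.
            if equal or steps > i + 1:
                break
    return bytes(known)


recovered = recover(b"TEMPEST")
```

The algorithm being attacked (equality) is never broken; only its implementation leaks. The search costs at most 256 guesses per byte instead of 256 to the power of the length.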

_

recursion - a codename which reveals nothing but is precisely concerned with revelation (a potential fake or red herring)

a (paranoiac) understanding of the world and (comic) model for perception:

TEMPEST as a reflective model…

for "perception", as a reflexive model for consciousness/rationalism

- each stage of the model examined: detection, decoding, reconstruction

- making of a model

TEMPEST as being revealed (the already signalled or encoded). Model revelation (pink light).

conceptual/practical intertwined

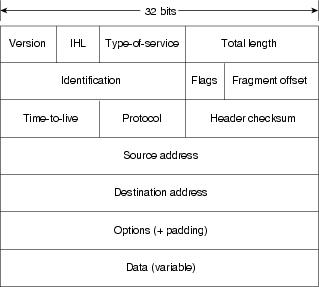

OSI [Open Systems Interconnection] as a model of abstraction and encapsulation (the packet).

from (never bare) detection (the detective) ascending layers of exposure - examining different vectors of revelation (what such and such an investigation reveals about the potentially meaningful, however trivial, message/signal)

a transition from the (so-called) physical layer to the protocol layer - a transition of great philosophical import.

OSI model - a division

| spectrum analysis | "physical"  |
|-------------------|-------------|
| protocol analysis | abstraction |

marking this division is the physicality of the antenna and RSSI. a direction into architecture. antenna|protocol

demodulation also as a bridge (removal of the carrier)
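A minimal sketch of demodulation as removal of the carrier, assuming the simplest case: AM with an envelope detector (rectify, then average over one carrier period). The function names, the carrier of 0.1 cycles per sample and the modulation depth are choices made for the illustration only.

```python
import math


def am_modulate(message, carrier=0.1, depth=0.5):
    """Scale a carrier by (1 + depth * message); carrier is in cycles per sample."""
    return [(1.0 + depth * m) * math.cos(2 * math.pi * carrier * n)
            for n, m in enumerate(message)]


def envelope_demodulate(signal, window=5):
    """Rectify, then smooth with a short moving average to strip the carrier."""
    rectified = [abs(s) for s in signal]
    half = window // 2
    smoothed = []
    for i in range(len(rectified)):
        chunk = rectified[max(0, i - half): i + half + 1]
        smoothed.append(sum(chunk) / len(chunk))
    mean = sum(smoothed) / len(smoothed)
    return [v - mean for v in smoothed]  # drop the DC offset left by the carrier


def correlation(a, b):
    """Pearson correlation, used here only to compare the two waveforms."""
    ma, mb = sum(a) / len(a), sum(b) / len(b)
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    va = sum((x - ma) ** 2 for x in a)
    vb = sum((y - mb) ** 2 for y in b)
    return cov / math.sqrt(va * vb)


# A slow sine as the "message", carried on a much faster cosine.
message = [math.sin(2 * math.pi * n / 200) for n in range(400)]
recovered = envelope_demodulate(am_modulate(message))
```

The window of 5 samples matches one period of the rectified carrier, which is why so short a filter is enough here; a real receiver would use a proper low-pass filter.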

modulation schemes

Analogue

- AM

- FM

- SSB - single sideband

- PM - phase modulation

- SM - space modulation

Digital

- Orthogonal frequency-division multiplexing [OFDM]

- Phase-shift keying [PSK]

and many more

example

what is 802.11 encoding/modulation?

one aspect is spread spectrum (Hedy Lamarr):

Frequency-hopping spread spectrum (FHSS) is a method of transmitting radio signals by rapidly switching a carrier among many frequency channels, using a pseudorandom sequence known to both transmitter and receiver.
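The "pseudorandom sequence known to both transmitter and receiver" can be sketched as two generators started from the same seed. This is an illustration only: real radios derive the hop pattern from their own standardised generators, not from Python's `random`, and the seed values and the 79 channels are assumptions chosen for the example.

```python
import random


def hop_sequence(shared_seed: int, n_channels: int, n_hops: int) -> list:
    """Channel index for each hop, derived only from the shared seed."""
    rng = random.Random(shared_seed)
    return [rng.randrange(n_channels) for _ in range(n_hops)]


# Transmitter and receiver agree on the seed in advance.
tx = hop_sequence(shared_seed=2008, n_channels=79, n_hops=8)
rx = hop_sequence(shared_seed=2008, n_channels=79, n_hops=8)

# A listener without the seed lands on other channels most of the time.
eavesdropper = hop_sequence(shared_seed=1972, n_channels=79, n_hops=8)
```

To the uninformed listener the transmission appears as short bursts scattered across the band, which connects back to the question of intention: the signal is meaningful only to whoever holds the sequence.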

modulation in this case is: Gaussian Frequency-Shift Keying (GFSK) is a type of Frequency Shift Keying modulation that utilizes a Gaussian filter to smooth positive/negative frequency deviations, which represent a binary 1 or 0. It is used by DECT, Bluetooth, Cypress WirelessUSB, Nordic Semiconductor and Z-Wave devices. For Bluetooth the minimum deviation is 115 kHz.

http://en.wikipedia.org/wiki/GFSK
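A rough sketch of the Gaussian smoothing step described above, in plain Python. It only computes the instantaneous frequency deviation for a bit sequence; it does not generate RF. The bandwidth-time product of 0.5, the 8 samples per symbol and the function names are assumptions made for the illustration; the 115 kHz deviation is the figure quoted above.

```python
import math


def gaussian_taps(bt: float, sps: int, span: int = 3) -> list:
    """Gaussian pulse-shaping filter, normalised so the taps sum to 1."""
    # Standard deviation in samples for bandwidth-time product `bt`.
    sigma = math.sqrt(math.log(2)) / (2 * math.pi * bt) * sps
    half = span * sps // 2
    taps = [math.exp(-0.5 * (n / sigma) ** 2) for n in range(-half, half + 1)]
    total = sum(taps)
    return [t / total for t in taps]


def gfsk_frequency(bits, sps=8, bt=0.5, deviation_hz=115e3):
    """Frequency deviation per sample: +deviation for 1, -deviation for 0, smoothed."""
    # NRZ mapping, each symbol held for `sps` samples.
    nrz = [1.0 if b else -1.0 for b in bits for _ in range(sps)]
    taps = gaussian_taps(bt, sps)
    half = len(taps) // 2
    out = []
    for i in range(len(nrz)):
        acc = 0.0
        for k, t in enumerate(taps):
            j = min(max(i + k - half, 0), len(nrz) - 1)  # hold the edge values
            acc += t * nrz[j]
        out.append(acc * deviation_hz)
    return out


freq = gfsk_frequency([1, 0, 1, 1, 0])
```

Without the filter the deviation would jump between the two extremes at every bit boundary; with it the transitions are rounded, which narrows the occupied spectrum.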

See also:

http://en.wikipedia.org/wiki/OFDM

and:

abstraction

[in an outside world]

what/where is abstraction in the world except as language and construction in the world

the question of whether this layered OSI model could enter (or has already entered) the world.

physical layer - identifiable only as such

physical layer is a red herring as OSI model "in the world" bites its own tail. simulation theory

- how detection/collection qualitatively determines the data set for analysis (time, stripping of protocols and layers by the OS or routing hardware)

detection is thus not transparent

taxonomy

To imagine some kind of taxonomy or system of classification - exploratory tools, active and passive probes, reconstruction, interpretative, visualisation, decryption and encryption, a beginning to making sense.

That this taxonomy could also follow and to some extent critique (or allow for a philosophical examination of) the OSI model of networking:

technical/practical and demonstrations

detection and collection

analysis

interpretation

making sense

visualisation/reconstruction

physical experiments - a question of frequency

Ascending the model and revealing the physicality of signals/systems of communication. A successive revealing of information regarding the meaning of such signals.

At the same time how each stage/experiment reveals something about the science and technology. The construction and use of the device allows us to examine and formulate ideas within this language (eg. frequency, field). Questions concerning the philosophy of physics:

demonstrations of simple interlinking

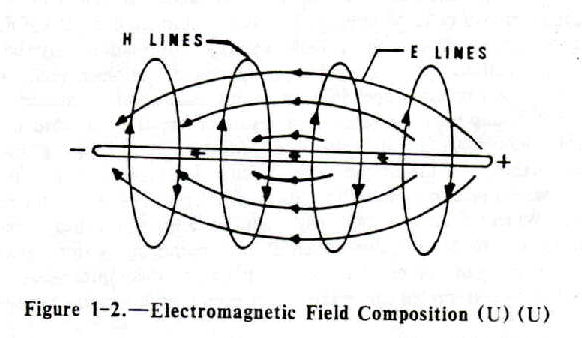

electricity and magnetism and related. field theory!

static, magnets, current

what is out there?

[mobile phones, DECT, 802.11(bg etc.), RFID, wideband emissions, 50 Hz power lines, broadcast radio and digital TV (DVB), radar, natural radio]

and how it interacts with bodies/architecture/materials/flux

TEMPEST AM radio 10 MHz

modulation

bare presence

detection using simple amplifier. low frequency. direct translation into sound waves. antennas

antenna as bridge between domains

cantenna (waveguide antennas)

http://www.turnpoint.net/wireless/cantennahowto.html

http://flakey.info/antenna/waveguide/ [with calculator for waveguide distances and so on]

[use of tetrapaks was also successfully tested in Berlin]

http://www.reseaucitoyen.be/wiki/index.php/BoiteDeLait

http://www.reseaucitoyen.be/wiki/index.php/Home_made - good resource

http://www.saunalahti.fi/~elepal/antenna2.html - also with link to calculator
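What the linked calculators work out can be sketched as follows, assuming the usual circular-waveguide treatment of a can: the TE11 mode sets the lowest usable frequency, and the probe sits a quarter of the guide wavelength from the closed end. The function name, the returned keys and the default of 2.437 GHz (802.11 channel 6) are choices made for this sketch; the linked pages remain the reference for actual builds.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def cantenna_dimensions(diameter_m: float, freq_hz: float = 2.437e9) -> dict:
    """Probe placement for a can of the given inner diameter (all values in metres)."""
    wavelength = C / freq_hz
    # Cutoff wavelength of the dominant TE11 mode in a circular waveguide.
    cutoff_te11 = math.pi * diameter_m / 1.8412
    if wavelength >= cutoff_te11:
        raise ValueError("can too narrow: the signal is below TE11 cutoff")
    guide = wavelength / math.sqrt(1.0 - (wavelength / cutoff_te11) ** 2)
    return {
        "wavelength": wavelength,
        "guide_wavelength": guide,
        "probe_from_back": guide / 4.0,   # distance from the closed end
        "probe_length": wavelength / 4.0,  # quarter-wave probe
    }


dims = cantenna_dimensions(0.100)  # a 100 mm can
```

For a 100 mm can this puts the probe roughly 44 mm from the closed end. A narrower can raises the cutoff, and below about 72 mm at this frequency the function refuses.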

use of the AD8313/8

High-frequency (100 MHz to 2.4 GHz) receiver based on the Analog Devices AD8313.

logarithmic detectors. different parts of the frequency spectrum
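A logarithmic detector gives an output voltage roughly proportional to input power in dB, so reading it back is a straight-line conversion. The sketch below assumes that linear-in-dB model. The default slope and intercept are placeholder values, not figures from these notes; the real ones come from the part's datasheet and, better, from calibration against a known source.

```python
def detector_dbm(v_out: float,
                 slope_v_per_db: float = 0.018,
                 intercept_dbm: float = -100.0) -> float:
    """Convert detector output voltage to input power in dBm.

    Model: v_out = slope * (p_in - intercept). Both defaults are
    placeholders to be replaced by calibrated values.
    """
    return v_out / slope_v_per_db + intercept_dbm


reading = detector_dbm(0.9)  # about -50 dBm with the placeholder constants
```

Because the scale is logarithmic, a small voltage change at the output can stand for a very large change in received power.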

full frequency - light

- small router or switch analysis with photodiode and amplifier

Simple C code for phototransistor TEMPEST using BPW42 and parallel port

./temppar <delay>

examination of signal strength

(RSSI - Received Signal Strength Indication). What this tells us about the signal - which frequencies:

wavemon, xoscope, iwspy:

http://users.skynet.be/chricat/signal/signal-strength.html

see also:

http://www.1010.co.uk/scrying_tech_notes.html
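When reading RSSI it helps to remember that dBm is a logarithmic unit. A small sketch of the conversions, assuming the driver reports dBm (some report arbitrary units instead); the function names are made up for the example.

```python
import math


def dbm_to_mw(dbm: float) -> float:
    """Power in milliwatts for a level in dBm (0 dBm is 1 mW)."""
    return 10 ** (dbm / 10)


def mw_to_dbm(mw: float) -> float:
    """Level in dBm for a power in milliwatts."""
    return 10 * math.log10(mw)


def mean_dbm(readings) -> float:
    """Average several readings in linear units, then convert back to dBm."""
    powers = [dbm_to_mw(r) for r in readings]
    return mw_to_dbm(sum(powers) / len(powers))


average = mean_dbm([-50, -70])  # close to -53 dBm, not -60
```

Every 10 dB is a factor of ten in power, so the stronger reading dominates the average.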

visualisation now enters the picture

introduction to scrying platform

- mapping signal strength

modules, mapping, mobile code execution, LRA

spectral analysis [beginning interpretation]:

baudline, wi-spy http://www.kismetwireless.net/spectools/
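Behind tools like these sits a Fourier transform. A minimal sketch with a direct DFT in plain Python, applied to a synthetic signal; the sample rate, the two tones and the function names are assumptions for the illustration, and a real tool would use an FFT with windowing.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Magnitude of each frequency bin from DC up to half the sample rate."""
    n = len(samples)
    mags = []
    for k in range(n // 2 + 1):
        acc = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                  for i, x in enumerate(samples))
        mags.append(abs(acc) / n)
    return mags


def dominant_frequency(samples, sample_rate):
    """Frequency in Hz of the strongest bin, ignoring DC."""
    mags = dft_magnitudes(samples)
    k = max(range(1, len(mags)), key=mags.__getitem__)
    return k * sample_rate / len(samples)


# Synthetic test signal: strong 50 Hz hum plus a weaker 120 Hz tone.
rate = 1000
signal = [math.sin(2 * math.pi * 50 * i / rate)
          + 0.3 * math.sin(2 * math.pi * 120 * i / rate)
          for i in range(200)]
peak = dominant_frequency(signal, rate)
```

With 200 samples at 1000 Hz each bin is 5 Hz wide, so both tones fall exactly on a bin. Real captures rarely do, which is where interpretation begins.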

bridging the protocol divide

USRP/software defined radio: spectrum analysis, AM receiver, TV, plotting (where is plotting stuff: http://www.1010.co.uk/org/notes.html#sec-7)

examples on that page

grc as patching app

Into demodulation and decoding - bbn-examples - wireless signals

above the physical layer - packets

what is out there?

and how we can examine and make sense of it

kismet:

Kismet is an 802.11 layer2 wireless network detector, sniffer, and intrusion detection system. Kismet will work with any wireless card which supports raw monitoring (rfmon) mode, and can sniff 802.11b, 802.11a, and 802.11g traffic.

Kismet identifies networks by passively collecting packets and detecting standard named networks, detecting (and given time, decloaking) hidden networks, and inferring the presence of nonbeaconing networks via data traffic.

the packet: network theory (TCP/IP)

protocol dissection

wireshark, tcpdump, arpwatch, pypcap, airsnort

Wireshark is a GUI network protocol analyzer. It lets you interactively browse packet data from a live network or from a previously saved capture file. Wireshark's native capture file format is libpcap format, which is also the format used by tcpdump and various other tools.
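The libpcap capture format mentioned above is simple enough to read by hand, which is a useful first dissection exercise. The sketch below handles only the classic format with microsecond timestamps (not pcapng); `read_pcap` is a name invented here and the capture is built in memory rather than taken from a real interface.

```python
import struct

PCAP_MAGIC = 0xA1B2C3D4  # classic libpcap, microsecond timestamps


def read_pcap(data: bytes):
    """Return (file header dict, list of (timestamp, raw packet bytes))."""
    magic = struct.unpack("<I", data[:4])[0]
    if magic == PCAP_MAGIC:
        endian = "<"
    elif magic == 0xD4C3B2A1:  # same magic, written on a big-endian machine
        endian = ">"
    else:
        raise ValueError("not a classic pcap file")
    _, major, minor, _zone, _sigfigs, snaplen, linktype = struct.unpack(
        endian + "IHHiIII", data[:24])
    packets = []
    offset = 24
    while offset + 16 <= len(data):
        ts_sec, ts_usec, incl_len, _orig_len = struct.unpack(
            endian + "IIII", data[offset:offset + 16])
        offset += 16
        packets.append((ts_sec + ts_usec / 1e6, data[offset:offset + incl_len]))
        offset += incl_len
    header = {"version": (major, minor), "snaplen": snaplen, "linktype": linktype}
    return header, packets


# A one-packet capture built in memory; the payload and timestamp are arbitrary.
capture = (struct.pack("<IHHiIII", PCAP_MAGIC, 2, 4, 0, 0, 65535, 1)
           + struct.pack("<IIII", 1225929600, 0, 4, 4)
           + b"\xde\xad\xbe\xef")
header, packets = read_pcap(capture)
```

Link type 1 is Ethernet. Everything above that layer is for the dissector to interpret from the raw bytes.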

netstat, netcat (nc), ntop, nmap, ngrep, tcpdump, snort, hping2, dsniff

construction of signals and packets

scapy

Scapy is a powerful interactive packet manipulation program. It is able to forge or decode packets of a wide number of protocols, send them on the wire, capture them, match requests and replies, and much more. It can easily handle most classical tasks like scanning, tracerouting, probing, unit tests, attacks or network discovery (it can replace hping, 85% of nmap, arpspoof, arp-sk, arping, tcpdump, tethereal, p0f, etc.). It also performs very well at a lot of other specific tasks that most other tools can't handle, like sending invalid frames, injecting your own 802.11 frames, combining technics (VLAN hopping+ARP cache poisoning, VOIP decoding on WEP encrypted channel, …), etc.
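To see what a tool like Scapy does underneath, an IPv4 header can be assembled by hand. This sketch uses only the standard library and is not Scapy's API; the function names are hypothetical, the addresses come from the documentation range, and nothing is sent on the wire.

```python
import socket
import struct


def internet_checksum(data: bytes) -> int:
    """16-bit one's-complement checksum as used in the IPv4 header."""
    if len(data) % 2:
        data += b"\x00"
    total = sum(struct.unpack("!%dH" % (len(data) // 2), data))
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold the carries back in
    return ~total & 0xFFFF


def build_ipv4_header(src: str, dst: str, payload_len: int,
                      proto: int = 17, ttl: int = 64, ident: int = 0) -> bytes:
    """20-byte IPv4 header without options; proto 17 is UDP."""
    def pack(checksum: int) -> bytes:
        return struct.pack(
            "!BBHHHBBH4s4s",
            (4 << 4) | 5,        # version 4, header length 5 words
            0,                   # type of service
            20 + payload_len,    # total length
            ident,
            0,                   # flags and fragment offset
            ttl,
            proto,
            checksum,
            socket.inet_aton(src),
            socket.inet_aton(dst),
        )
    # Checksum is computed over the header with the checksum field zeroed.
    return pack(internet_checksum(pack(0)))


ip_header = build_ipv4_header("192.0.2.1", "192.0.2.2", payload_len=8)
```

A receiver verifies the header by running the same checksum over all 20 bytes and expecting zero. Forging "invalid frames" amounts to breaking rules like this one deliberately.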

other topics

collection techniques

detection/collection/analysis at micro/macro levels eg. long term (using access points and batteries) or coarse grained analysis

other research vectors

serial port and USB sniffing, keyboards, hard drive (conventional digital forensics - dd, strings, AFF [Advanced Forensic Format]), acoustic emanations, steganography (steghide, nlstego), timing attacks and entropy, memory, execution and gdb

raw file/data analysis - baudline (file open and bit view option), dd, strings, devdisplay, raw files in octave (histogram):

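myfile = fopen("testrnd_delay_none", "r");  % open the raw capture read-only
x = fread(myfile, "uchar");                 % read every byte as an unsigned 8-bit value
fclose(myfile);
hist(x)          % distribution of byte values over the whole file
hist(x(1:50))    % distribution over the first 50 bytes only
plot(x)          % byte value against position in the file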
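The same histogram can be reduced to a single number, the Shannon entropy of the byte values, which ties in with the "timing attacks and entropy" vector above. A sketch in Python, assuming the data has already been read into a `bytes` object; the function names are invented for the example.

```python
import math
from collections import Counter


def byte_histogram(data: bytes) -> Counter:
    """Count how often each byte value (0-255) occurs."""
    return Counter(data)


def shannon_entropy(data: bytes) -> float:
    """Entropy in bits per byte: 0 for constant data, 8 for uniform bytes."""
    if not data:
        return 0.0
    n = len(data)
    return -sum(c / n * math.log2(c / n)
                for c in byte_histogram(data).values())


flat = shannon_entropy(bytes(range(256)))      # every value exactly once
constant = shannon_entropy(b"\x00" * 100)      # one value repeated
```

Values close to 8 suggest compressed, encrypted or random data; values well below suggest text or structured records. It is a first hint, not a classification.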

reconstruction/visualisation

R, gnuplot, octave, matplotlib, basemap

see scrying examples

making sense

- mapping with GPS

- GeoIP maps: http://www.maxmind.com/app/c

- correlations
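A minimal sketch of the first item, mapping with GPS: signal-strength readings tagged with a position are binned into a coarse grid and averaged per cell. The cell size, the function name and the sample readings are all made up for the illustration, and a plain mean of the dBm values is used for brevity.

```python
import math
from collections import defaultdict


def grid_average(samples, cell_deg: float = 0.001) -> dict:
    """Average RSSI per grid cell; samples are (lat, lon, rssi_dbm) tuples."""
    cells = defaultdict(list)
    for lat, lon, rssi in samples:
        key = (math.floor(lat / cell_deg), math.floor(lon / cell_deg))
        cells[key].append(rssi)
    return {key: sum(values) / len(values) for key, values in cells.items()}


# Invented readings, roughly placed in central Oslo.
walk = [
    (59.9135, 10.7525, -60),
    (59.9137, 10.7528, -70),
    (59.9152, 10.7525, -80),
]
heat = grid_average(walk)
```

The resulting dictionary can be handed to any of the plotting tools listed under reconstruction/visualisation. The choice of cell size already shapes what the map appears to show.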

schedule

Day One:

Introduction of all participants

Introduction to theory and techniques of data forensics: experiments, demonstrations, software, hardware - an overview.

Data collection expedition in Oslo city centre

Day Two:

Discussion of previous day’s research

Discussion of artist works within the field of data forensics

Assessment of relevant techniques by each participant

Commence personal projects in Oslo interior/exterior

Day Three:

Discussion of personal projects

Further collective experiments and suggestions for future work and direction

Sketches for artistic work

rough process:

1-background 2-collection 3-decoding and interpretation 4-visualisation/recoding 5-packet creation

references/collected resources

Date: 2009-12-31 15:37:42 GMT
